מבוא ללמידת מכונה קוונטית

סקירה כללית ומוטיבציה

לפני שמתחילים, מלאו את סקר טרום-הקורס הקצר הזה, שחשוב לשיפור תכני הקורס וחוויית המשתמש.

Note: This survey is provided by IBM Quantum and relates to the original English content. To give feedback on doQumentation's website, translations, or code execution, please open a GitHub issue.

ברוכים הבאים ללמידת מכונה קוונטית!

הסרטון למטה יספק מבוא קצר שמשלים את הטקסט הבא.

לסיכום קצר ולהרחבת הסרטון:

- ראינו בעיה שנפתרה לראשונה על מחשב קוונטי, ולאחר מכן אנשים מצאו דרך לפתור אותה על מחשב-על קלאסי. מחזור זה של מחשוב קלאסי וקוונטי שדוחפים זה את זה לגבולות שלהם ככל הנראה ימשיך עוד מספר שנים.

- ישנן בעיות ספציפיות שבהן מחשוב קוונטי יכול להציע יתרון מוכח על מחשוב קלאסי, בהינתן התקדמות ב�תחומים כמו הפחתת שגיאות ומספר ה-Qubit הזמינים. אך זהו עדיין זמן של חקירה, בחיפוש אחר מערכי נתונים מתאימים לקוונטום ומפות מאפיינים קוונטיות שימושיות.

- למידת מכונה קוונטית (QML) היא אחד מתחומי הריגוש הרבים שבהם מחשוב קוונטי יכול להעצים או להשלים תהליכי עבודה קלאסיים קיימים.

למידת מכונה (ML) מיישמת אלגוריתמים על מערכי נתונים, ולכן QML עשויה כנראה לכלול מכניקת קוונטום בצד הנתונים, בצד האלגוריתמים, או בשניהם. כל האפשרויות הללו הן בעלות עניין פוטנציאלי. אך נגביל את עצמנו בעיקר לדיונים על אלגוריתמים קוונטיים המיושמים על נתונים קלאסיים. אחת הסיבות לכך היא שבעיות ML עם נתונים קלאסיים כבר נחקרו כל כך טוב ונמצאות בשימוש נרחב. יש עניין רחב בפתרון בעיות שמתחילות בנתונים קלאסיים. סיבה נוספת היא היעדר QRAM. ללא היכולת לאחסן כמויות גדולות של נתונים קוונטיים על פני קנה מידה זמן ארוך יחסית, שיטות שמתחילות בנתונים קוונטיים עדיין רחוקות למדי מיישומיות לתעשייה. גם לא ברור כיצד "לגשת קוונטית" לנתונים קלאסיים באופן יעיל. שני סוגי ML בעלי עניין מיוחד הם למידה מפוקחת, שבה אתם מאמנים אלגוריתם תוך שימוש במערך נתונים מתויג, ולמידה לא-מפוקחת, שבה האלגוריתם מנסה ללמוד על התפלגות מדגימות לא מתויגות. אלגוריתם לא-מפוקח עשוי, למשל, ללמוד כיצד לייצר דגימות חדשות מאותה התפלגות, או כיצד לאגד את הדגימות לקבוצות עם מאפיינים דומים.

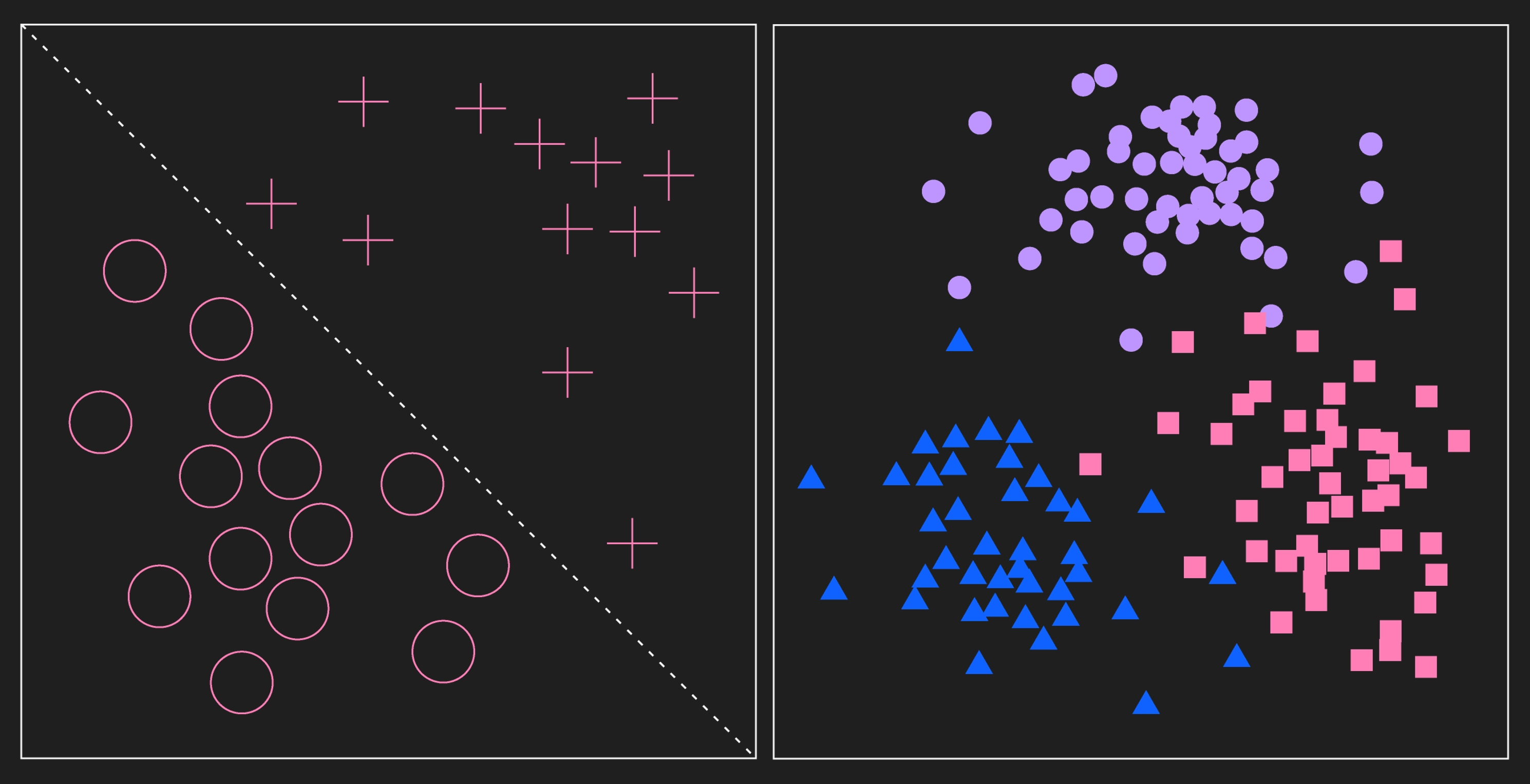

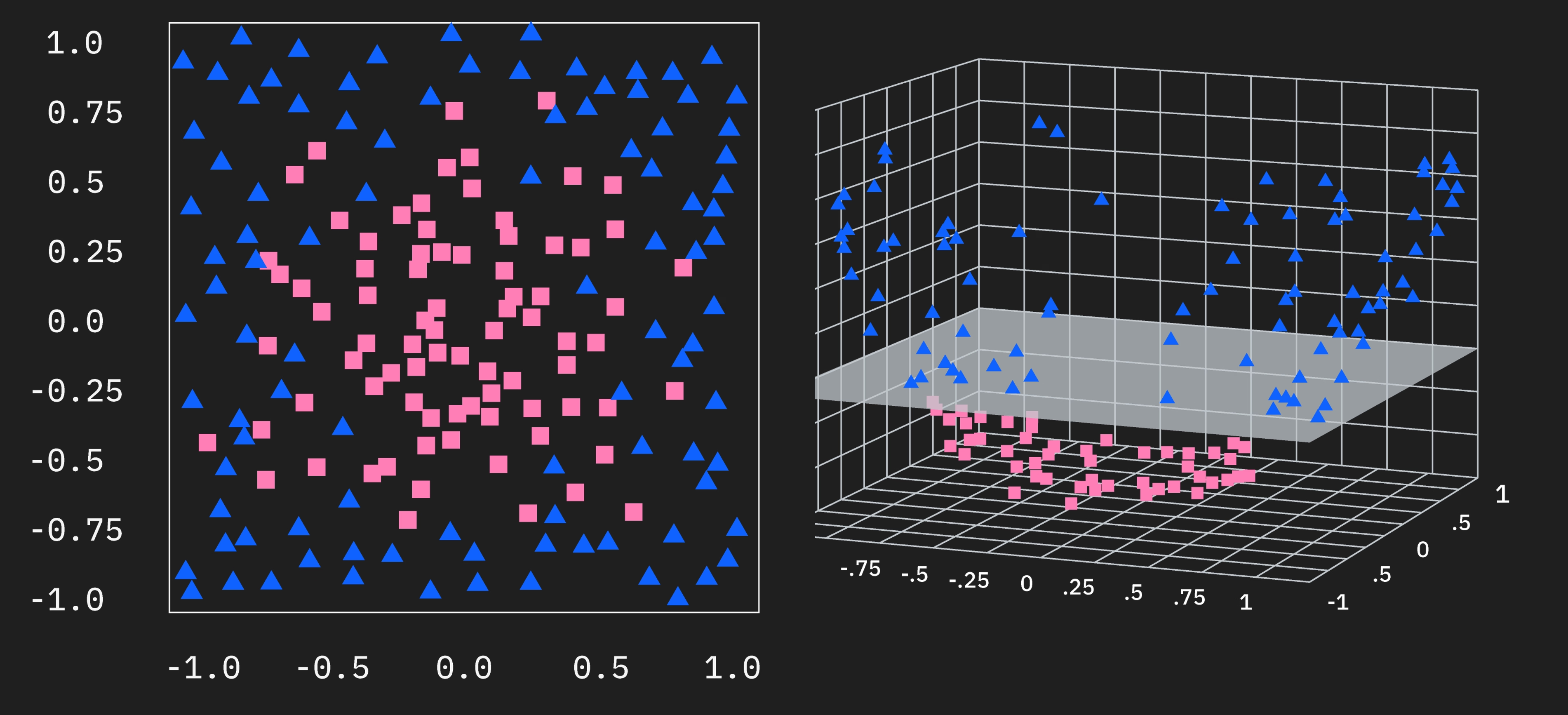

התמונה השמאלית מציגה שתי קטגוריות של נתונים מתויגים כמו בלמידה מפוקחת. במקרה זה, הקטגוריות ניתנות להפרדה לינארית. התמונה הימנית מציגה אשכולות של נתונים. במשימת למידה לא-מפוקחת, נתונים אלו לא יהיו מתויגים בתחילה והאלגוריתם יחקור את ההתפלגות, אולי מחפש אשכולות. לצורך הדמיה של האשכולות שהאלגוריתם עשוי לזהות, נקודות הנתונים תויגו כעת. הבדל מרכזי בין השניים הוא שתהליך הלמידה המפוקחת מתחיל עם נתונים מתויגים מראש ותהליך הלמידה הלא-מפוקחת מתחיל עם נתונים לא מתויגים, גם אם הנתונים מתויגים בסוף.

מי שיש לו רקע בלמידת מכונה כבר יודע שרבות משיטות הפתרון כוללות מיפוי נתונים למרחבים ממדיים גבוהים יותר. זה נחקר במיוחד בהקשר של גרעינים. כתזכורת קצרה, לפעמים ניתן להפריד נתונים לקטגוריות באמצעות קו, מישור, או היפר-מישור (נאמר לעתים קרובות פשוט "היפר-מישור" לתמציתיות), באותו מספר ממדים שבהם הנתונים ניתנים. זה מוצג בתמונה הראשונה למעלה. פעמים אחרות, ייתכן שלא ניתן להפריד נתונים באמצעות היפר-מישור בממדים אלו, כפי שמוצג בתמונה השנייה. אך עדיין יכולה להיות מבנה בנתונים שניתן לנצל במיפוי לממדים גבוהים יותר, שאז מאפשר הפרדת הנתונים במרחב הממדי הגבוה יותר. זה ממחיש את המיפוי של נתוני 2D עם סימטריה מעגלית למרחב 3D שבו נקודות הנתונים מסודרות לאורך משטח פרבולואיד.

מטרה נפוצה ב-QML היא למצוא מיפוי מקבוצת המאפיינים הממדית הנמוכה יותר למרחב ממדי גבוה יותר, שמפריד ביעילות את נקודות הנתונים שלנו כדי שנוכל להשתמש במיפוי לסיווג נקודות נתונים חדשות. אך זו אינה משימה קלה, וכל דיון על השימושיות הפוטנציאלית של מחשוב קוונטי בלמידת מכונה חייב להיות מלווה בהסתייגויות המתאימות. בפרט, עלינו לטפל בניואנס שבבחירת מערכי נתונים ובאתגרים בהשגת קנה מידה שימושי. עלינו גם לסטות מניסיון לעלות על אלגוריתמי ML קלאסיים על נתונים שכבר מטופלים ביעילות ובצורה טובה על ידי אלגוריתמים קלאסיים, ולהפנות את הדיון לחקירת מפות מאפיינים חדשות שעשויות להיות שימושיות.

ניהול ציפיות

מערכי נתונים רבים המשמשים ביישומי QML המתוארים בספרות הם "מהונדסי מאפיינים", כלומר מערך נתונים נבחר או נוצר ספצי�פית להצגת מקרה שימוש צר שבו מחשוב קוונטי שימושי. אם זה נשמע כמו רמייה אז אנחנו מבינים לא נכון את המשימה הנמצאת בפנינו. לא נכון שמפות מאפיינים קוונטיות מסוימות מאפשרות לנו לפתור את כל משימות הסיווג או רבות מהן ביעילות רבה יותר או בסקלאביליות רבה יותר מאלגוריתמי למידת מכונה קלאסיים. במקום זאת, מפות מאפיינים קוונטיות מסוימות (לא כולן) מתנהגות שונה ממפות מאפיינים קלאסיות. המשימה הנמצאת בפנינו היא אז לחקור מעגלים קוונטיים בהקשר של מבני נתונים מורכבים. כמה שאלות ספציפיות לטפל בהן הן:

- אילו מעגלים קוונטיים הם בעלי הסבירות הגבוהה ביותר להתנהג בדרכים חדשניות, בהשוואה לחלופות קלאסיות?

- האם ישנן בעיות בעולם האמיתי הכוללות נתונים עם תכונות שנחקרות בצורה הטובה ביותר תוך שימוש במעגלים קוונטיים חדשניים כאלה?

- האם מעגלים קוונטיים אלה מתרחבים על מחשבים קוונטיים בטווח קצר?

הסבר לא מספיק

לעתים קרובות נתקלים בהסבר פשטני של כיצד מחשוב קוונטי יכול להיות עוצמתי. הוא נשמע בערך כך:

בדיוק כמו שמחשבים קלאסיים משתמשים בסיביות מידע, מחשבים קוונטיים משתמשים ב-Qubit. בהינתן מספר סיביות, נגיד 4, מחשב קלאסי יכול לנקוט כל אחד מ- מצבים אפשריים, בעוד שמחשב קוונטי יכול להתקיים בסופרפוזיציה של כל 16 המצבים בו-זמנית, וניתן לבצע פעולות על כל הסופרפוזיציה הזו. במקרים מסוימים, זה מאפשר לנו באופן טבעי לעצב אלגוריתמי למידה מעניינים פוטנציאליים המבוססים על מיפויים למרחבים ממדיים גבוהים יותר.

זהו אמירה נכונה, אך היא אינה מספקת, ומעט מטעה כפי שנסביר. גם ניתן לראות שהדגשים בין מקדמים מרוכבים לממשיים מודגשים, כמו:

מערכת קלאסית הסתברותית שבה ניתן לתאר מערכת כבעלת הסתברויות מסוימות להיות במצבים שונים, ניתן לתאר�ה כדלקמן.

במערכת כזו, המקדמים , , וכן הלאה יכולים להיות בעלי משמעות רק אם הם מספרים ממשיים חיוביים. המצבים במחשבים קוונטיים מתוארים על ידי אמפליטודות הסתברות שיכולות להיות מספרים מרוכבים.

האמירות לעיל נוסחו בזהירות רבה כך שהן נכונות (אמירות רבות הדומות להן ברכיתן שגויות). אך האמירות הנכונות הללו אינן הסבר לעוצמת המחשוב הקוונטי בלמידת מכונה. ראשית, כל יישום של מחשוב קוונטי ללמידת מכונה יכלול מדידות ואיננו יכולים למדוד Qubit להיות במספר מצבים בו-זמנית. ניתן להכין Qubit בסופרפוזיציה כמו אך מדידה תניב או . אז כמינימום, הסיפור הזה על הגדלת ממדיות אינו שלם. יתר על כן, בהקשר של גרעינים, ממדיות מוגברת במחשוב קוונטי אינה יכולה להיות תנאי מספיק לכוח חישובי מעל חלופות קלאסיות, מכיוון שגרעינים גאוסיים הם אינסוף ממדיים. ישנם ניואנסים שם, בכך שמפות מאפיינים גאוסיות משמשות רק בשילוב עם "טריק הגרעין" שמתחמק מהצורך לחשב פעם וקטור ממופה אינסוף-ממדי. אך הנקודה נשארת:

ממדיות גבוהה של מצבים קוונטיים שזורים אינה מקביליות אקספוננציאלית, ואינה תנאי מספיק לעוצמה מוגברת בלמידת מכונה.

בשיעורים שיבואו, אנחנו מציגים תהליכי עבודה לשילוב מעגלים קוונטיים במשימות למידת מכונה, ואנחנו עושים זאת לצורך מפורש של הנחת החקירה של עוצמת המחשוב הקוונטי. אף מפת מאפיינים או אלגוריתם בקורס זה אינם מוצגים כנתיב מהיר לתוצאות למידת מכונ�ה טובות יותר לבעיות כלליות, כי אין מפת מאפיינים או אלגוריתם כזה. במקום זאת, אנחנו מציגים מגוון רחב של כלים קוונטיים לשימוש בחקירת מחשוב קוונטי שימושי.

דה-קוונטיזציה

דה-קוונטיזציה מתייחסת להחלפה של אלגוריתם קוונטי נתון באלגוריתם קלאסי שמבצע בצורה דומה לאלגוריתם קוונטי עבור קבוצת משימות נתונה, בדרך כלל כולל קנה מידה. על פי הגדרות מסוימות, האלגוריתם הקלאסי צריך לבצע לאט יותר רק בסדר פולינומי מהאלגוריתם הקוונטי.

כמה אלגוריתמי למידת מכונה קוונטית (QML) שנחשב ראשית שהם מספקים יתרונות מהירות משמעותיים על פני אלגוריתמים קלאסיים עברו דה-קוונטיזציה בשנים האחרונות. תהליך הדה-קוונטיזציה הזה הוביל לתובנות חשובות לגבי היתרונות הפוטנציאליים והמגבלות של גישות קוונטיות ללמידת מכונה.

אחד מתוצאות הדה-קוונטיזציה הבולטות ביותר הגיעה מעבודתו של Ewin Tang על מערכות המלצות. Tang גילה אלגוריתם קלאסי שיכול לבצע משימות המלצה במהירויות שנחשבו בעבר ניתנות להשגה רק על ידי מחשבים קוונטיים. גילוי זה אתגר את ההנחה שלאלגוריתמים קוונטיים יש יתרון אקספוננציאלי לבעיה זו. עבודות יותר עדכניות של Shin et al. התמקדו בזיהוי תנאים על יכולת הדה-קוונטיזציה של מחלקת הפונקציות של מודל למידת מכונה קוונטית וריאציוני.

גישה נפוצה אחת לדה-קוונטיזציה (אם כי לא הטריק היחיד) היא דרך שיקול של עלות טעינת הנתונים. כלומר, כל אלגוריתם קוונטי המיושם על נתונים קלאסיים יכלול שלב שבו נתונים קלאסיים מקודדים למחשב הקוונטי. אם אלגוריתם קוונטי מניח נקודת התחלה שבה נתונים קוונטיים כבר זמינים, אז בעצם מסתירים את הזמן הנדרש לקידוד. ישנם הקשרים שבהם הנחת נתונים קוונטיים עשויה להיות סבירה, אך יישומים רבים בעלי עניין יתחילו בנתונים קלאסיים. מקרים מסוימים של דה-קוונטיזציה הראו שכאשר זמן הקידוד הזה כלול, וכאשר טעינת נתונים קלאסיים יכולה להתבצע ביעילות, האלגוריתם הקוונטי כבר לא עולה על עמיתו הקלאסי.

גם אם לא ניתן לבצע דה-קוונטיזציה לאלגוריתם, זה לא אומר שהוא יעיל יותר או סקאלבילי יותר מכל האלגוריתמים הקלאסיים. כדוגמה קיצונית ומלאכותית: דמיינו אלגוריתם לבחירת j האיברים הגדולים ביותר מקבוצה בגודל k. ניתן לכתוב אלגוריתם קוונטי שמשתמש באלגוריתם של Shor לפרק כל אחד מ-k האיברים לגורמים ראשוניים, ולאחר מכן לקבוע את האיברים הגדולים ביותר תוך שימוש בגורמים הראשוניים. לאלגוריתם כזה ככל הנראה לא ניתן לבצע דה-קוונטיזציה, אך הוא פחות יעיל בהרבה מאלגוריתמים קלאסיים לאותה בחירה של איברים גדולים (אם כי לא חלק הפירוק לגורמים המיותר).

הוכחת קיום

ב-2021, חוקרי IBM Quantum® Yunchao Liu, Srinivasan Arunachalam, ו-Kristan Temme פרסמו מאמר ב-Nature, יתרון מהירות קוונטי מחמיר ואמין בלמידת מכונה מפוקחת. בהתאם להסתייגויות לעיל, בעיית סיווג נבחרה בקפידה לעבודה זו שהיא (1) ידועה כקשה קלאסית, ו-(2) מתאימה לאלגוריתמים קוונטיים להציג יתרון מהירות.

המאמר עוסק בסיווג נתונים המבוסס על לוגריתמים דיסקרטיים. לצטט את המאמר, "עבור מספר ראשוני גדול ומחולל של , זוהי השערה מקובלת שאין אלגוריתם קלאסי שיכול לחשב על קלט , בזמן פולינומי ב-, מספר הסיביות הנדרשות לייצוג ." לעומת זאת, אלגוריתם Shor ידוע כפותר את בעיית הלוג הדיסקרטי בזמן פולינומי. בחירת הבעיות מספקת בו-זמנית את שני הקריטריונים לעיל: קשיות קלאסית (לא סביר שתעבור דה-קוונטיזציה), וידועה כמתאימה לאלגוריתמים קוונטיים.

באמצעות הבחירה השיקולית הזו של בעיית הסיווג, המחברים הצליחו להראות יתרון מהירות אקספוננציאלי תוך שימוש בשיטות גרעין קוונטי (מוצג בקצרה להלן ונדון בשיעורים מאוחרים יותר) שהוא גם קצה-לקצה וגם אמין. כאן, "קצה-לקצה" מתייחס להנחות לגבי תחילה בנתונים קלאסיים; המחברים כן כוללים את הזמן לקידוד הנתונים. כאן, "אמין" מתייחס לעובדה שהנתונים לסיווג מופרדים במרווח רחב תוך שימוש באלגוריתם הקוונטי, כך שהצלחת הסיווג עמידה בפני שיקולים מהעולם האמיתי כמו שגיאת דגימה סופית.

כל זאת כדי לומר שישנן בעיות שבהן גרעינים קוונטיים יכולים להניב יתרון מהירות אקספוננציאלי. אך המצב הנוכחי של המדע הוא שבעיות כאלה נבחרות על סמך תצפיות או הצדקה תיאורטית שהן צריכות להיות מתאימות לאלגוריתמים קוונטיים. לא ריאלי לצפות ליתרון מהירות קוונטי למשימות למידת מכונה שמחשבים קלאסיים כבר מבצעים ביעילות רבה.

זיהוי מקרים אידיאליים כאלה לחקירת שימושיות קוונטית היא אחריות עצומה ללומדים בקורס זה. וזו אינה משימה שניתן לבצע בקורס כזה. אותה חקירה היא משימה עבור רשת IBM Quantum כולה, המורכבת מחוקרים כמוכם. קורס זה ידגים תהליכי עבודה של QML ואסטרטגיות קידוד כדי שתוכלו להתחיל לחקור שימושיות קוונטית בתחום המומחיות שלכם.

אנחנו מקווים שמבוא זה הבהיר מספר דברים לגבי למידת מכונה קוונטית:

- אלגוריתמים קוונטיים יכולים להציע יתרון מהירות אקספוננציאלי על אלגוריתמים קלאסיים לבעיות ספציפיות מאוד שקשות קלאסית ומתאימות לאלגוריתמים קוונטיים.

- ממדיות גבוהה של מצבים שזורים במחשוב קוונטי חשובה, אך אינה מספיקה להשגת יתרון על אלגוריתמים קלאסיים.

- מציאת בעיות המתאימות לאלגוריתמים קוונטיים היא משימה קשה ביותר, ואחת שתפול ברובה על הלומדים בקורס זה.

שאלות בדיקה

מה מבדיל מצבים קוונטיים ממצבים קלאסיים?

תשובה:

הרבה דברים. בעיקר: מקדמים מרוכבים וסופרפוזיציה עם עותק יחיד. ישנם הבדלים רבים נוספים שייושנו בשיעורים עתידיים, כולל שזירה והפרעה.

נכון או לא נכון? מצבים קוונטיים שזורים מאוד מאפשרים לנו לפתור את רוב בעיות למידת המכונה ביעילות רבה יותר על מחשב קוונטי.

תשובה:

לא נכון. רוב בעיות למידת המכונה נפתרות ביעילות רבה על ידי אלגוריתמים קלאסיים ואלגוריתמים קוונטיים לא צפויים להציע יתרון מהירות משמעותי. המטרה ב-QML היא למצוא מערכי נתונים עם מאפיינים המתוארים היטב על ידי מצבים קוונטיים ו/או למצוא מיפויים של מאפייני נתונים שממטבים את דיוק המודלים.

מטרות למידה של הקורס

לאחר השלמת קורס זה, תוכלו לצפות לבנות את הכישורים והמיומנויות הבסיסיות הבאות. לומדים יוכלו:

-

להסביר מהי QML ואיפה קוונטום מתחבר ללמידת מכונה קלאסית.

-

להחיל מונחון קוונטי ומונחות מפתח על תהליכי עבודה של ML.

-

לזהות מרכיבי מפתח של תהליך עבודה של QML (סוגים שונים).

-

לזהות סוגים שונים של QML ולהבחין ביניהם.

-

לממש שיטות גרעין קוונטי ומסווגים קוונטיים וריאציוניים תוך שימוש ב-Qiskit Runtime primitives ובעקבות דפוסי Qiskit.

-

לזהות היכן QML מבטיחה ביותר והיכן לא.

-

להתאים בעיה לדוגמה למערך הנתונים שלהם.

-

להיות מודעים לבעיות ב-QML כמו זמן אימון, רעש ושגיאה מצטברת בקריאות מצב מרובות.

-

להמליץ היכן QML עשויה להועיל לארגון שלהם.

מבנה הקורס

קורס זה מורכב ממספר שיעורים. כל שיעור כולל מספר שאלות בדיקה לאורך הטקסט, כך שתוכלו לתרגל כישורים חדשים או לבדוק את הבנתכם תוך כדי. אלו אינן חובה.

בסוף הקורס, יש חידון של 20 פריטים. עליכם לקבל ציון של לפחות 70% בחידון זה כדי לקבל את התג של למידת מכונה קוונטית שלכם, דרך Credly. אם תקבלו לפחות 70%, התג שלכם יישלח אוטומטית בדוא"ל אליכם, זמן קצר לאחר מכן. ניתן להגיש את החידון פעמיים בלבד. לאחר ההגשה הראשונה, תקבלו הזדמנות לנסות שוב את השאלות שפספסתם. לאחר ההגשה השנייה, הציון שלכם סופי. ראו את החידון לפרטים נוספים.

מבנה הקורס הוא כדלקמן:

- שיעור 1: מבוא וסקירה כללית

- שיעור 2: סקירה חוזרת של למידת מכונה

- שיעור 3: קידוד נתונים

- שיעור 4: שיטות גרעין קוונטי ומכונות וקטורים תומכים

- שיעור 5: מסווגים קוונטיים וריאציוניים / רשתות עצביות

- בחינה לתג

הריצו את קוד QML הראשון שלכם

לעתים קרובות מועיל לראות לאן אנחנו הולכים, לפני שמפרקים לחלקים ומתעמקים ברקע. תאי הקוד למטה מבצעים מופע פשוט של שיטת גרעין קוונטי. ספציפית, מחושב איבר מטריצת גרעין יחיד. משתמשים חדשים לשיטות גרעין או לגרעינים קוונטיים אל יירתעו מכך; שיעורים מרובים בקורס זה יוקדשו לפירוק בדיוק מה שנעשה בתאים הללו.

בקוד זה אנחנו מציגים בו-זמנית את דפוסי Qiskit: מסגרת לגישה למחשוב קוונטי בקנה מידה שימושי. מסגרת זו מורכבת מארבעה שלבים כלליים מאוד שניתן ליישם על רוב הבעיות (אם כי בחלק מתהליכי העבודה, שלבים מסוימים עשויים לחזור מספר פעמים).

דפוסי Qiskit:

- שלב 1: מיפוי קלטים קלאסיים לבעיה קוונטית

- שלב 2: אופטימיזציה של הבעיה לביצוע קוונטי

- שלב 3: ביצוע תוך שימוש ב-Qiskit Runtime Primitives

- שלב 4: ניתוח / עיבוד לאחר

בתאים למטה, אנחנו מציעים הסברים חלקיים בלבד של השלבים השונים, רק כדי שתוכלו למצוא את השיעור המתאים ללמוד עוד.

# Added by doQumentation — required packages for this notebook

!pip install -q numpy pandas qiskit

# Import some qiskit packages required for setting up our quantum circuits.

from qiskit.circuit import Parameter, ParameterVector, QuantumCircuit

from qiskit.circuit.library import unitary_overlap

# Import StatevectorSampler as our sampler.

from qiskit.primitives import StatevectorSampler

# Step 1: Map classical inputs to a quantum problem:

# Start by getting some appropriate data. The data imported below consist of 128 rows or data points.

# Each row has 14 columns that correspond to data features, and a 15th column with a label (+/-1).

!wget https://raw.githubusercontent.com/qiskit-community/prototype-quantum-kernel-training/main/data/dataset_graph7.csv

# Import some required packages, and write a function to pull some training data out of the csv file you got above.

import pandas as pd

import numpy as np

def get_training_data():

"""Read the training data."""

df = pd.read_csv("dataset_graph7.csv", sep=",", header=None)

training_data = df.values[:20, :]

ind = np.argsort(training_data[:, -1])

X_train = training_data[ind][:, :-1]

return X_train

# Prepare training data

X_train = get_training_data()

# Empty kernel matrix

num_samples = np.shape(X_train)[0]

# Prepare feature map for computing overlap between two data points.

# This could be pre-built feature maps like ZZFeatureMap, or a custom quantum circuit, as shown here.

num_features = np.shape(X_train)[1]

num_qubits = int(num_features / 2)

entangler_map = [[0, 2], [3, 4], [2, 5], [1, 4], [2, 3], [4, 6]]

fm = QuantumCircuit(num_qubits)

training_param = Parameter("θ")

feature_params = ParameterVector("x", num_qubits * 2)

fm.ry(training_param, fm.qubits)

for cz in entangler_map:

fm.cz(cz[0], cz[1])

for i in range(num_qubits):

fm.rz(-2 * feature_params[2 * i + 1], i)

fm.rx(-2 * feature_params[2 * i], i)

# Pick two data points, here 14 and 19, and assign the features to the circuits as parameters.

x1 = 14

x2 = 19

unitary1 = fm.assign_parameters(list(X_train[x1]) + [np.pi / 2])

unitary2 = fm.assign_parameters(list(X_train[x2]) + [np.pi / 2])

# Create the overlap circuit

overlap_circ = unitary_overlap(unitary1, unitary2)

overlap_circ.measure_all()

overlap_circ.draw("mpl", scale=0.6, style="iqp")

# Step 2: Optimize problem for quantum execution

# Use Qiskit Runtime service to get the least busy backend for running on real quantum computers.

# from qiskit_ibm_runtime import QiskitRuntimeService

# service = QiskitRuntimeService(channel="ibm_quantum")

# backend = service.least_busy(

# operational=True, simulator=False, min_num_qubits=overlap_circ.num_qubits

# )

# Transpile the circuits optimally for the chosen backend using a pass manager.

# from qiskit.transpiler.preset_passmanagers import generate_preset_pass_manager

# pm = generate_preset_pass_manager(optimization_level=3, backend=backend)

# overlap_ibm = pm.run(overlap_circ)

# Step 3: Execute using Qiskit Runtime Primitives

# Specify the number of shots to use.

num_shots = 10_000

## Evaluate the problem using statevector-based primitives from Qiskit

sampler = StatevectorSampler()

counts = (

sampler.run([overlap_circ], shots=num_shots).result()[0].data.meas.get_int_counts()

)

# Step 4: Analyze and post-processing

# Find the probability of 0.

counts.get(0, 0.0) / num_shots

--2025-05-09 10:04:28-- https://raw.githubusercontent.com/qiskit-community/prototype-quantum-kernel-training/main/data/dataset_graph7.csv

Resolving raw.githubusercontent.com (raw.githubusercontent.com)... 185.199.110.133, 185.199.109.133, 185.199.108.133, ...

Connecting to raw.githubusercontent.com (raw.githubusercontent.com)|185.199.110.133|:443... connected.

HTTP request sent, awaiting response... 200 OK

Length: 49405 (48K) [text/plain]

Saving to: ‘dataset_graph7.csv.2’

dataset_graph7.csv. 100%[===================>] 48.25K --.-KB/s in 0.03s

2025-05-09 10:04:29 (1.37 MB/s) - ‘dataset_graph7.csv.2’ saved [49405/49405]

0.8199

למרות שאינכם צריכים להבין את כל השלבים לעיל, עלינו לנסות להבין את הפלט, כדי שנדע מדוע אנחנו עושים זאת. תהליכים רבים בלמידת מכונה משתמשים במכפלות פנימיות כחלק מסיווג בינארי (בין השאר). למכניקת הקוונטים יש קשר ברור לכך, מכיוון שהסתברויות מדידת מצבים שונים ניתנות על ידי המכפלה הפנימית עם מצב התחלתי דרך המכפלה הפנימית: . אז מה שעשינו לעיל הוא יצרנו Circuit קוונטי המכיל את המאפיינים של שתי נקודות הנתונים שלנו, וממפה אותם למרחב של וקטור קוונטי, ואז מעריך את המכפלה הפנימית במרחב זה דרך ביצוע מדידות. זוהי דוגמה לאמדן גרעין קוונטי. שימו לב שמימשנו תהליך זה רק עבור שתיים מנקודות הנתונים (ה-14 וה-19). אם היינו עושים זאת עבור כל הזוגות האפשריים, יכולנו לקחת את הפלט (במקרה זה המספר 0.821...) ולאכלס מטריצה של תוצאות המתארת את החפיפה בין כל הנקודות במערך הנתונים לאימון. זוהי "מטריצת הגרעין".

בדקו את הבנתכם

קראו את השאלה למטה, חשבו על תשובתכם, ואז לחצו על המ�שולש לגילוי הפתרון.

בתהליך לעיל, חישבנו איבר מטריצת גרעין עבור נקודות הנתונים ה-14 וה-19. איזה ערך אנחנו צריכים לקבל אם נשתמש באותה נקודת נתונים פעמיים, כאן (כמו ה-14 וה-14 שוב)? במילים אחרות, מהם צריכים להיות האיברים האלכסוניים במטריצת הגרעין? ענו על שאלה זו בהיעדר רעש, אך שימו לב שסטיות מתשובתכם אפשריות בנוכחות רעש.

תשובה:

האלכסונים צריכים להיות 1.0. תהליך זה צריך לחשב את המכפלה הפנימית המנורמלת של וקטור עם עצמו, שחייבת תמיד להיות אחד.